(cross-posted from The Blog @ Graeme’s Place)

I’ve been a bit quiet on the blogging side of things for a while, and in my defence I’ve been pretty heads-down working as a vendor for Microsoft as the lead author for a couple of new courses on SQL Server 2012 data warehousing and BI (courses 10777A and 10778A if you’re interested). As part of this work, I’ve been exploring the new data cleansing capabilities in SQL Server 2012 Data Quality Services (DQS). This article is a simple walkthrough of how to use DQS to cleanse data as part of an Enterprise Information Management (EIM) or Extract, Transform, and Load (ETL) solution.

So, what is data cleansing all about then? Well, most people involved in building or managing data-driven applications and BI solutions will have come across the problem of inconsistent or invalid data values for columns (or “domains”) that are used for business analysis. For example, let’s suppose your database stores customer data, including the customer’s address, and you want to count customer sales by country. When customers or sales employees enter customer data into the system, it’s perfectly possible (and actually quite likely given a large enough volume of customers) that some values will be either entered incorrectly (for example “Unted States” instead of “United States”) or inconsistently (for example, some customers may enter “United States”, some others may enter “USA”, and others still may enter “America”). When you try to aggregate sales, you’ll end up with inaccurate counts because there are several values in use for the same country.
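To see how quickly this skews an aggregate, here is a tiny Python sketch. The list of entered values is made up for illustration and is not taken from the sample data used in this article.

```python
from collections import Counter

# Hypothetical country values as they might be typed into a customer form.
entered = [
    "United States", "USA", "America",
    "Unted States", "United States", "United Kingdom",
]

# A naive aggregation treats every distinct spelling as a separate country.
sales_by_country = Counter(entered)

print(sales_by_country["United States"])  # 2, although 5 of the 6 customers are in the US
print(len(sales_by_country))              # 5 distinct "countries" for only 2 real ones
```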

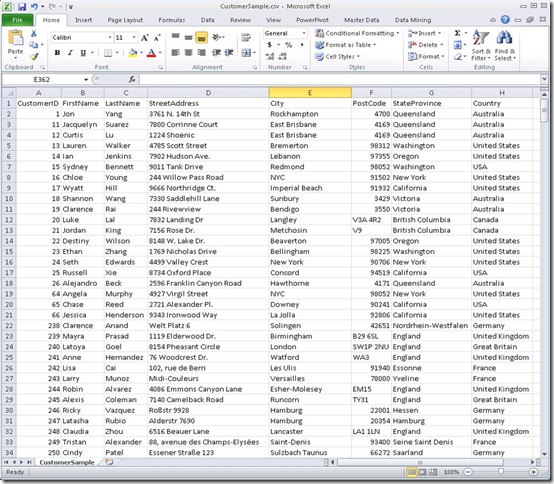

Here’s an Excel workbook containing a subset of data extracted from a SQL Server database table to show some typical data quality problems.

Note that the data contains a number of problems, including:

- The City column contains “New York” and “NYC” for New York City.

- The Country column contains “United States” and “USA” for the US.

- The Country column also contains “United Kingdom” and “Great Britain” for the UK.

DQS enables you to address this problem by cleansing the data based on a known set of values and rules for the key domains (columns) that exist in your datasets. The way that DQS does this is by enabling you to create and maintain a knowledge base that contains the known valid values for a related set of domains, along with validation rules (for example, an EmailAddress value must include a “@” character) and common synonyms that can be corrected to a leading value (for example, correcting “USA” and “America” to the leading value “United States”). After you have created a knowledge base, you can use it to cleanse any data that includes the same domains (so for example, if you create a knowledge base for geographical domains such as City, State, and Country, you can use it to cleanse any data that includes these fields, such as customer data or employee address data). SQL Server 2012 includes the Data Quality Services Client tool (shown below), which you can use to create, maintain, and use DQS knowledge bases.
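If it helps to think of a knowledge base in code terms, the following Python sketch models a domain as a set of leading values, a synonym map, and an optional validation rule. This is only a conceptual toy: the `Domain` class, its `cleanse` method, and the lowercase status strings are names I have invented for illustration, not part of any DQS API.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Domain:
    """Toy stand-in for a knowledge base domain (hypothetical, not a DQS type)."""
    name: str
    leading_values: set = field(default_factory=set)
    synonyms: dict = field(default_factory=dict)  # synonym -> leading value
    rule: Optional[Callable[[str], bool]] = None  # returns True for valid values

    def cleanse(self, value: str):
        """Return (output_value, status) for a single source value."""
        if self.rule is not None and not self.rule(value):
            return value, "invalid"
        if value in self.leading_values:
            return value, "correct"
        if value in self.synonyms:
            return self.synonyms[value], "corrected"
        return value, "new"  # not known yet; a candidate for review


country = Domain(
    "Country",
    leading_values={"United States", "United Kingdom"},
    synonyms={"USA": "United States", "America": "United States"},
)
email = Domain("EmailAddress", rule=lambda v: "@" in v)

print(country.cleanse("USA"))            # ('United States', 'corrected')
print(country.cleanse("United States"))  # ('United States', 'correct')
print(email.cleanse("bob.example.com"))  # ('bob.example.com', 'invalid')
```

The point of the sketch is that the knowledge lives in the domain rather than in the dataset, which is why the same knowledge base can be reused for any data that contains those fields.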

When you create a new knowledge base, you can do so from scratch, or you can use an existing knowledge base as a starting point. SQL Server 2012 ships with a pre-existing knowledge base for US-based demographic data named DQS Data, and in this example, I’ll use it as the basis for my own CustomerKB knowledge base as shown below.

The DQS Data knowledge base includes a number of pre-defined domains, as shown in the image above. I only need some of these domains, and I’ll need to add some additional ones that are specific to my own data; so I’ve initially selected the Domain Management activity as I create the CustomerKB knowledge base. I only intend to use the Country/Region, US – Last Name, and US – State domains from the DQS Data knowledge base, so I’ll delete the others. The domains I’m retaining contain official values for country and US state names, and common last names (surnames) based on US demographic data such as the 2000 US census.

Since my customer data includes records for customers all over the world, I’ll rename the domains in my knowledge base to remove the “US” prefix. I’ll also add a new domain named City so that I can validate city names in the data.

Note that I can select each domain and view the known values that are currently defined in the knowledge base as shown below. The City domain has no known values (because I’ve just created it), and the others have inherited values from the DQS Data knowledge base. The image below shows the known values for the Country/Region domain. Note that the knowledge base defines leading values for each country (such as “Afghanistan”) and synonyms that, while valid in their own right, should be corrected to the leading value to ensure consistency.

I’ve now completed my initial knowledge base, so I’m ready to finish the domain management activity. Clicking Finish produces a prompt to publish the knowledge base as shown below, but before I’m ready to use it I want to populate the known values for the City domain from my existing data by performing some knowledge discovery; so I’ll click No.

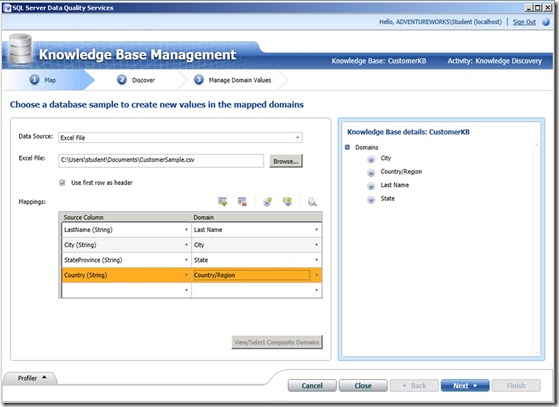

Knowledge Discovery is an activity in which you connect to a data source and map fields in the source to domains in the knowledge base. DQS can then use the data source to discover new values for the domains defined in the knowledge base. The first step in this process is to open the knowledge base for the Knowledge Discovery activity as shown here. Note that the activity is performed using a wizard interface, with a sequence of steps.

After opening the knowledge base, I need to select a data source (I’m using the Excel workbook we saw earlier), and map the columns in the data source to the domains in the knowledge base as shown below. Note that the data source can include columns that are not mapped to domains, and does not need to include a column for every domain in the knowledge base. However, only the mapped domains will be included in the knowledge discovery process.

On the next page, I can start the data discovery analysis. DQS will read the source data and identify new values for the domains in the knowledge base, as shown here.
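Conceptually, the discovery step counts the values found in each mapped column and flags the ones the knowledge base does not know yet. The Python sketch below illustrates that idea only. The `discover` function, the dictionary shapes, and the sample rows are assumptions for illustration and say nothing about how DQS implements the analysis internally.

```python
from collections import Counter


def discover(rows, mapping, known_values):
    """Count values per mapped domain and flag those not yet known.

    rows:         list of dicts, one per source record
    mapping:      source column name -> domain name (unmapped columns are ignored)
    known_values: domain name -> set of values already in the knowledge base
    """
    results = {}
    for column, domain in mapping.items():
        counts = Counter(row[column] for row in rows if row.get(column))
        known = known_values.get(domain, set())
        results[domain] = {
            value: {"count": n, "new": value not in known}
            for value, n in counts.items()
        }
    return results


# Made-up sample rows; the Name column is deliberately left unmapped.
rows = [
    {"Name": "A", "City": "New York", "Country": "United States"},
    {"Name": "B", "City": "NYC", "Country": "USA"},
    {"Name": "C", "City": "London", "Country": "Great Britain"},
]
mapping = {"City": "City", "Country": "Country/Region"}
known = {"City": set(), "Country/Region": {"United States", "USA", "United Kingdom"}}

found = discover(rows, mapping, known)
print(found["Country/Region"]["Great Britain"])  # {'count': 1, 'new': True}
print(found["Country/Region"]["USA"])            # {'count': 1, 'new': False}
```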

On the final page of the wizard, you can view the values that have been discovered for each domain. In this example, the values discovered for the City domain include New York and NYC, as shown below. I can identify these as synonyms by selecting them both and clicking the Set selected domain values as synonyms button.

The value I selected first becomes the leading value, as shown here.

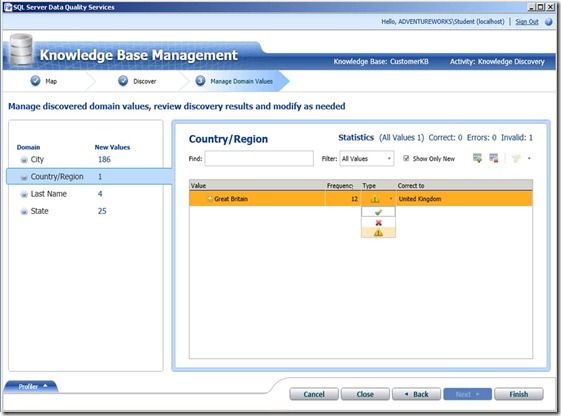

For the Country/Region domain, DQS has discovered a new “Great Britain” value. I can mark this as invalid and specify an existing value to which it should be corrected (in this case, “United Kingdom”).

Clearing the Show Only New checkbox reveals the values that already existed before knowledge discovery, and I can see that “Great Britain” is now under the “United Kingdom” leading value. I can also see that there were 151 instances of the existing “United States” value found, along with a further 42 instances of “USA”, which was already specified as a synonym for “United States”.

Now I’m ready to finish the knowledge discovery activity and publish the knowledge base.

After you have published a knowledge base, you can use it to cleanse data from any data source containing columns that can be mapped to the domains defined in it. The simplest way to do this is to create a new data quality project based on the knowledge base and specify the Cleansing activity, as shown here.

Again, the activity takes the form of a wizard with sequential steps. The first step is to map the columns in the data source to the domains in the knowledge base, just as I did when performing the knowledge discovery activity previously; only this time I’m using the full Customers table in my CustomerDB SQL Server database instead of the sample data I had extracted to Excel.

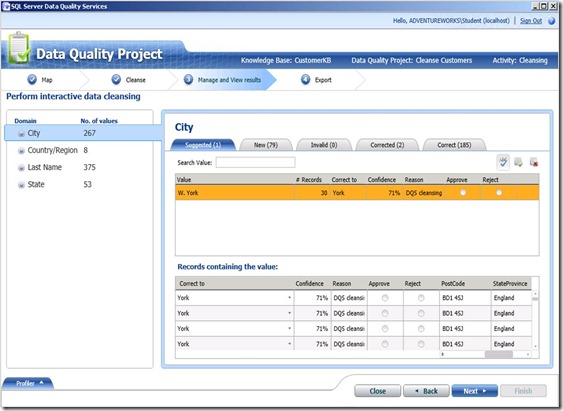

Next, I run the cleansing process and DQS applies the knowledge base to the source data to identify corrected and suggested values. Corrected values are corrections DQS makes to the data based on known rules and synonyms. Suggested values are further possible corrections or new values that are generated based on a number of data quality heuristics that DQS uses when analyzing data.

On the next page, on the Suggestions tab for each domain, I can view the suggestions identified by DQS. Here, DQS has identified a City domain value of “W. York”, which is sufficiently similar to the known value “York” for a correction to be suggested. Note that I can select the value and view the records that contain it to verify that “W. York” is commonly being used to denote “York” in England (as opposed, for example, to “New York” in the United States). I can then approve or reject individual instances of the correction, or approve or reject the suggestion as a whole: if I approve it, “W. York” is treated as a synonym of “York”; if I reject it, “W. York” is added as a new known value in its own right.
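This article does not cover how DQS scores similarity, so the following is only a rough Python illustration of similarity-based suggestions, using the standard library's `difflib.SequenceMatcher` as a stand-in. The `suggestions` function and the 0.7 threshold are arbitrary choices of mine, not DQS settings.

```python
from difflib import SequenceMatcher


def suggestions(value, known_values, threshold=0.7):
    """Return (candidate, score) pairs at or above the threshold, best first.

    The score is difflib's similarity ratio between 0 and 1; it is a stand-in
    for whatever matching DQS actually performs.
    """
    scored = [
        (candidate, SequenceMatcher(None, value.lower(), candidate.lower()).ratio())
        for candidate in known_values
    ]
    return sorted(
        [(c, s) for c, s in scored if s >= threshold],
        key=lambda pair: pair[1],
        reverse=True,
    )


for candidate, score in suggestions("W. York", ["York", "New York", "London"]):
    print(f"{candidate}: {score:.2f}")
```

With a plain string ratio like this, both “York” and “New York” come back as candidates for “W. York”, which is a good reminder of why it is worth checking the underlying records before approving a suggestion.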

On the New tab, I can view the new values that were discovered for the domain. In this case, a number of new values were identified for the City domain, including Bracknell in England.

On the Corrected tab, I can view the values that were corrected based on pre-existing known synonyms or suggestions that I have approved.

After reviewing the results of the cleansing activity, I can export the cleansed data to a SQL Server database table, a .csv file, or an Excel workbook. Note that I can choose to export just the cleansed data values or I can include the cleansing information for further analysis.

The exported results are shown in the following image. Note that the results include all of the source columns, and that for each of the columns that was mapped to a domain there are five columns in the results: the source value, the output value, the reason for any corrections, the level of confidence (between 0 and 1) for the correction, and the status of the value (correct or corrected).
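If you export to a .csv file with the cleansing information included, those extra columns make it easy to review the corrections in code. The Python sketch below assumes a `<Domain>_Source`, `<Domain>_Output`, `<Domain>_Reason`, `<Domain>_Confidence`, `<Domain>_Status` naming pattern for the five columns, so check the headers in your own export. The sample rows, including the reason text and confidence values, are invented for illustration.

```python
import csv
import io

# Made-up export rows; the column names follow an assumed naming pattern.
EXPORT = """CustomerID,City_Source,City_Output,City_Reason,City_Confidence,City_Status
1,NYC,New York,Synonym,1,Corrected
2,New York,New York,,,Correct
3,W. York,York,Approved suggestion,0.72,Corrected
"""


def corrected_rows(csv_text, domain, min_confidence=0.0):
    """List (source, output, confidence) for values marked as corrected."""
    rows = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        if row[f"{domain}_Status"] != "Corrected":
            continue
        # Treat a blank confidence cell as 0 so the comparison still works.
        confidence = float(row[f"{domain}_Confidence"] or 0)
        if confidence >= min_confidence:
            rows.append((row[f"{domain}_Source"], row[f"{domain}_Output"], confidence))
    return rows


print(corrected_rows(EXPORT, "City"))
# [('NYC', 'New York', 1.0), ('W. York', 'York', 0.72)]
print(corrected_rows(EXPORT, "City", min_confidence=0.9))
# [('NYC', 'New York', 1.0)]
```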

By creating a data cleansing project, a business user who understands the data domains can act as a “data steward” and enforce the quality of the data in application databases or analytical and reporting systems. Additionally, when you are confident in the ability of your knowledge base to cleanse data, you can incorporate DQS data cleansing into a SQL Server Integration Services (SSIS) data flow that extracts data from a source as part of an ETL process for data warehousing or EIM. The following image shows an SSIS data flow that includes the DQS Cleansing transformation.

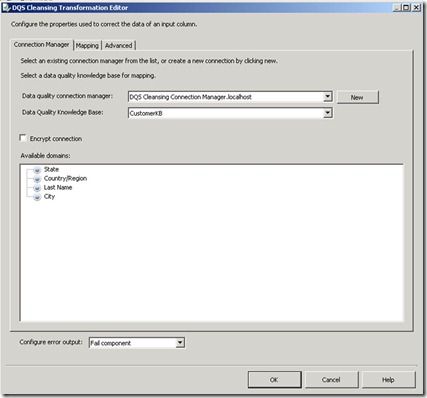

In this example, the CustomerDB data source uses an OLE DB connection to extract data from the Customers table in SQL Server. The DQS Cleansing transformation is then configured to use the CustomerKB knowledge base and map the appropriate columns from the data source to domains for cleansing, as shown here.

The Staging DB destination uses an OLE DB connection to load data from the data flow into a staging table as part of an ETL process for a data warehousing solution. The output columns for the mapped domains are used to load the cleansed values into the staging table, as shown here.

Running the SSIS package extracts the source data, applies the DQS knowledge base to cleanse the mapped columns, and loads the cleansed data into the staging database as shown here.

This walkthrough provides a simple example of how you can use DQS to cleanse data and improve data quality for reporting and analysis. There are a number of additional features of DQS that are not shown here, including the ability to define composite domains that consist of multiple columns and the ability to include external reference cleansing data from the Windows Azure Data Market in your knowledge base (for example to apply post code validation and correction rules based on standard data from a postal service authority). You can learn more about using DQS to cleanse data by attending course 10777A: Implementing a Data Warehouse with SQL Server 2012.
